In the name of Allah, most gracious and most merciful,

Table of Contents

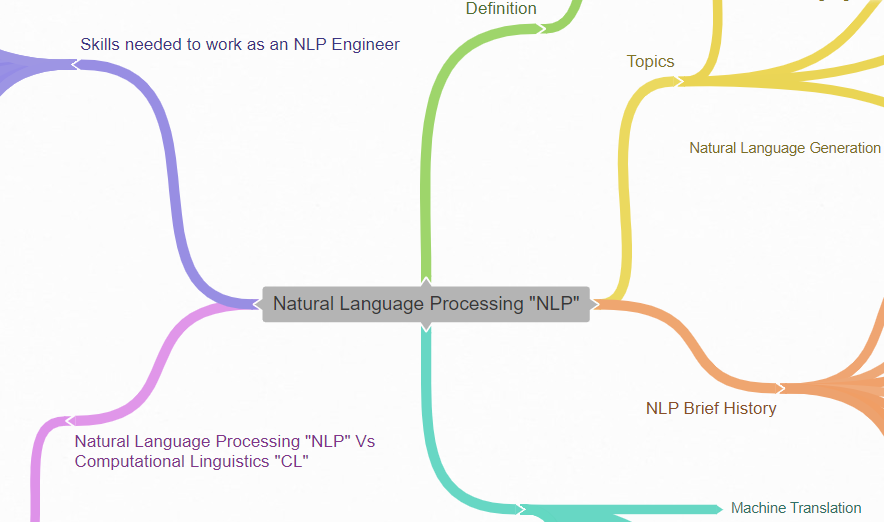

Introduction

Language is very special to humans. Much of humanity's progress throughout history comes from the ability to communicate effectively and to share what has been learned and discovered across the ages. Moreover, language could be considered a big part of human intelligence, since it affects how we see the world. Think about it: when you think about something, what do you do? Well, you speak to yourself in your language. Therefore, the more expressive and accurate your language is, the better you can think, and the more details you can carve out during your thinking process. It is like having a great set of thinking tools that help you break down whatever problem you come across, describe it, analyze it, and finally solve it.

Natural language seems complex because it is not only about knowing some vocabulary; it also relies on background knowledge, gained through experience, that helps us understand words in different contexts. So what if the computer were able to understand human language? Well, that is a big part of what Natural Language Processing “NLP” is about.

In turn, making computers understand human language could help us understand how the brain works.

1. Definition

Natural Language Processing emerged as a combination of computer science (including Artificial Intelligence) and linguistics, with the aim of making computers understand human language and analyze huge amounts of natural language data in order to build useful applications.

In short, NLP is about turning text “unstructured data” into structured data that we can benefit from in different applications.
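As a rough illustration of turning unstructured text into structured data, here is a minimal sketch that uses regular expressions from Python's standard library. The sample message, the field names (`order_id`, `date`, `email`), and the `extract_fields` helper are all made up for this example. Real NLP systems go far beyond pattern matching.

```python
import re

# Hypothetical free-text message; the content and field names are illustrative only.
text = "Order 1042 shipped to Cairo on 2021-03-15. Contact: sara@example.com"

def extract_fields(message: str) -> dict:
    """Pull a few structured fields out of an unstructured sentence."""
    order = re.search(r"Order (\d+)", message)            # digits after the word "Order"
    date = re.search(r"\d{4}-\d{2}-\d{2}", message)       # a YYYY-MM-DD date
    email = re.search(r"[\w.+-]+@[\w-]+\.[\w.]+", message)  # a simple email pattern
    return {
        "order_id": int(order.group(1)) if order else None,
        "date": date.group(0) if date else None,
        "email": email.group(0) if email else None,
    }

record = extract_fields(text)
print(record)
```

The result is a dictionary, which is "structured" in the sense that a program can look up each field by name instead of reading the sentence.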

Processing and understanding language isn’t simple, since it requires human knowledge and a combination of Linguistics, Cognitive Science, Data Science, Computer Science, and more. Therefore, it is not just about having a very large vocabulary to train the machine on. Human language is really mysterious.

By the way, engineering could be thought of as restructuring what is already out there into a suitable form, using physics and mathematics, so that the new structure is useful and easy to benefit from. For instance, a mechanical device such as a car or an airplane could be considered a reorganization and integration of several parts in a way that makes them useful.

Engineering Insight

2. Topics

2.1 Speech Processing

2.1.1 Speech Recognition

Speech recognition converts speech into text. The text can then be processed to extract insights or to power one of the applications that we will see later in the NLP Applications section.

2.1.2 Text to Speech

Text to speech, also known as speech synthesis, converts written text into spoken audio.

2.2 Natural Language Understanding (NLU) (includes Information Extraction “IE”)

It is an NLP subfield concerned with how machines can understand and analyze unstructured data. It converts unstructured human language into structured data that the computer can work with. This understanding is based on grammar and context.

Natural Language Understanding can be performed using syntactic analysis and semantic analysis, which break natural language down into machine-readable chunks.

2.2.1 Syntactic Analysis “Parsing or Syntax Analysis”

Identifying the syntactic structure of the text in order to know the relationships between words. In other words, understanding the grammar of the text.
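To make the idea of syntactic structure more concrete, here is a minimal sketch of a toy parser in plain Python. The three-rule grammar (S -> NP VP, NP -> Det N, VP -> V NP), the tiny lexicon, and the function names are assumptions made only for illustration. Real parsers handle far larger grammars and ambiguity.

```python
# Toy lexicon: maps each word to its part of speech (illustrative only).
LEXICON = {
    "the": "Det", "a": "Det",
    "dog": "N", "cat": "N",
    "chased": "V", "saw": "V",
}

def parse_np(tokens, i):
    """NP -> Det N. Returns (tree, next_index), or None if no NP starts at i."""
    if (i + 1 < len(tokens)
            and LEXICON.get(tokens[i]) == "Det"
            and LEXICON.get(tokens[i + 1]) == "N"):
        return ("NP", ("Det", tokens[i]), ("N", tokens[i + 1])), i + 2
    return None

def parse_vp(tokens, i):
    """VP -> V NP. Returns (tree, next_index), or None if no VP starts at i."""
    if i < len(tokens) and LEXICON.get(tokens[i]) == "V":
        np = parse_np(tokens, i + 1)
        if np:
            np_tree, j = np
            return ("VP", ("V", tokens[i]), np_tree), j
    return None

def parse_sentence(sentence):
    """S -> NP VP. Returns a nested-tuple tree, or None if the grammar does not fit."""
    tokens = sentence.lower().split()
    np = parse_np(tokens, 0)
    if not np:
        return None
    np_tree, i = np
    vp = parse_vp(tokens, i)
    if not vp:
        return None
    vp_tree, j = vp
    # Accept only if every token was consumed.
    return ("S", np_tree, vp_tree) if j == len(tokens) else None

tree = parse_sentence("The dog chased a cat")
print(tree)
```

The nested tuples show which words group together: "the dog" forms a noun phrase acting as the subject, and "chased a cat" forms a verb phrase that contains another noun phrase.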

2.2.2 Semantic analysis

Trying to understand the meaning of the text by analyzing sentence structure and the interactions between words. In other words, understanding the text’s literal meaning.

2.2.3 Pragmatic analysis

Understanding the intent of the text, or its true meaning in context.

2.3 Natural Language Generation (NLG)

In simple words, the machine generates text based on structured data that it has already understood. So before generating text, it first needs to understand the data.

What You Can Expect to Learn in Detail (Natural Language Processing with Python Textbook Table of Contents)

- Language Processing and Python (includes Automatic Natural Language Understanding)

- Accessing Text Corpora and Lexical Resources

- Processing Raw Text

- Writing Structured Programs

- Categorizing and Tagging Words

- Learning to Classify Text

- Extracting Information from Text

- Analyzing Sentence Structure

- Building Feature-Based Grammars

- Analyzing the Meaning of Sentences (includes Natural Language Understanding)

- Managing Linguistic Data

3. NLP Brief History

1950s

The Turing Test: An intelligence criterion proposed by Alan Turing in his 1950 article titled “Computing Machinery and Intelligence”. If a machine can imitate human conversation with no noticeable difference, then the machine can be considered capable of thinking.

1952

The Hodgkin-Huxley model described how neurons in the brain generate and propagate electrical signals, forming an electrical network. This helped inspire the idea of Artificial Intelligence “AI”, and NLP.

1957

Professor Noam Chomsky revolutionized previous linguistic concepts, arguing that sentence structure would have to be changed for computers to understand human language.

He introduced a style of grammar called Phrase-Structure Grammar, which translated natural language sentences into a format usable by computers, with the aim of imitating how the human brain thinks and communicates.

1958 – 1966

After many trials, many people considered AI and NLP research to be dead.

1980s

Until the 1980s, most NLP systems were based on complex hand-written rules. Then, in the late 1980s, a revolution in NLP came from a combination of increased computational power (see Moore’s law), the adoption of machine learning algorithms, and the gradual decline in the dominance of Chomskyan theories of linguistics (e.g. transformational grammar), whose theoretical underpinnings discouraged the corpus linguistics that underlies the machine learning approach. Moreover, IBM was responsible for developing many successful and complicated statistical NLP models.

1990s

Statistical NLP models like N-grams became more popular, and LSTM recurrent neural network (RNN) models were introduced in 1997.

2000s

Research increased its focus on semi-supervised and unsupervised machine learning algorithms in order to benefit from the growing amount of unstructured or unlabeled data on the World Wide Web, like text, images, etc. If you don’t understand the meaning of semi-supervised, unsupervised, and structured data, you could refer to this machine learning exploration post.

2010s – present

Representation learning methods (like those in deep neural networks) became widely used in NLP, achieving state-of-the-art results in many NLP tasks.

4. NLP Applications

- Machine Translation

- Chatbots

- Text Summarization

- Understanding Human Language Production

- Monologic Discourses Extended Planning

- Business Intelligence Dashboard Analysis

- Business Data/Data Analysis Reporting

- Reporting IoT Maintenance and Device Status

- Summarize Client Financial Portfolio

- Personalized Communications with Customers

- Email Filtering

- Psycholinguistics Modeling

- Automatic Ticket Routing

- Automated Reasoning

- Question Answering

- Social Media Monitoring

- Survey Analysis

- Targeted Advertising

- Hiring and Recruitment

- Voice Assistants

- Grammar Checkers

- Topic Modeling (Identifying Text Topic)

- Named Entity Recognition (NER)

5. Natural Language Processing “NLP” Vs Computational Linguistics “CL”

Although there is a subtle difference between these two terms, they are often used interchangeably. CL is more concerned with research and theory, while NLP is more concerned with the practical side of building applications.

From my observation of LinkedIn profiles, Computational Linguists usually graduated as linguists and then took up programming and computation as a supplement. It is as if they majored in Linguistics with a minor in engineering. NLP Engineers, however, usually graduated from computer science, computer engineering, or another engineering-related discipline. They are engineers first “Engineering is their major”, but it is preferable for them to know more about linguistics, since NLP is an interdisciplinary field between Computer Science (AI, …) and Linguistics.

6. Skills needed to work as an NLP Engineer

Since Natural Language Processing relies heavily on Machine Learning, the skills required are the same as the Machine Learning Engineer skills written in this post, with the addition of the skills below. Of course, the weight of different skills will vary depending on which field you are in (Machine Learning in general, NLP, or Computer Vision for instance).

Not all of these skills are required to start a job as an NLP engineer. It depends on the company you are applying to, its needs, and your exact role in it.

- Understand text representation techniques (N-grams, BoW “Bag of Words”, TF-IDF, Word2Vec, …)

- Understand semantic extraction techniques

- Text classification, and clustering

- Deep Learning (Feed Forward Neural Networks “FFNN”, RNN “Recurrent Neural Networks”, CNN “Convolutional Neural Networks”, …)

- Syntactic, and Semantic parsing

- Linguistic Knowledge (Preferable)

- Understand compilers

- Machine Learning Libraries (Ex: scikit-learn)

- Machine Learning Frameworks (Ex: TensorFlow, Keras, PyTorch, …)

- Familiarity with Big Data Frameworks (Ex: Hadoop, Spark, etc …)

- CI/CD (Continuous Integration/Continuous Delivery) Pipelines
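To give a feel for the text representation techniques named in the list above (N-grams, Bag of Words, TF-IDF), here is a minimal sketch in plain Python. The three sample sentences and the function names are made up for illustration, and the TF-IDF formula used here (term count divided by document length, times log(N / document frequency)) is just one common textbook variant. Libraries often use smoothed or normalized versions, so their numbers will differ.

```python
import math
from collections import Counter

# Tiny made-up corpus, already lowercased; tokenization is a plain whitespace split.
docs = [
    "the cat sat on the mat",
    "the dog sat on the log",
    "cats and dogs",
]
tokenized = [d.split() for d in docs]

def ngrams(tokens, n):
    """Return all n-grams in a token list as tuples of n consecutive tokens."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def bag_of_words(tokens):
    """Bag of Words: word counts, ignoring word order."""
    return Counter(tokens)

def tf_idf(tokenized_docs):
    """One dict per document mapping term -> tf * idf (unsmoothed variant)."""
    n_docs = len(tokenized_docs)
    # Document frequency: in how many documents each term appears.
    df = Counter(term for doc in tokenized_docs for term in set(doc))
    weights = []
    for doc in tokenized_docs:
        counts = Counter(doc)
        weights.append({
            term: (count / len(doc)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return weights

bigrams = ngrams(tokenized[0], 2)
bow = bag_of_words(tokenized[0])
weights = tf_idf(tokenized)

print(bigrams)
print(bow)
print(weights[0])
```

In this toy corpus, "cat" appears in only one document, so it gets a higher TF-IDF weight in the first sentence than "sat", which appears in two. That is the intuition behind TF-IDF: words that are frequent in one document but rare across the collection are treated as more informative.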

Possible Useful Knowledge

- Ontology Engineering: It is concerned with methods and methodologies for building ontologies. Ontologies are formal representations of a set of concepts within a certain domain and the relationships between those concepts.

- Knowledge Graphs

Finally

Thank you. I hope this post has been beneficial to you. I would appreciate any comments if anyone needs more clarification or has seen something wrong in what I have written, so that I can correct it, and I would also appreciate any possible enhancements or suggestions. We are humans, and mistakes are expected from us, but we can also minimize those mistakes by learning from them and by seeking to improve what we do and how we do it.

Allah bless our master Muhammad and his family.

References

https://en.wikipedia.org/wiki/Natural_language_processing

https://en.wikipedia.org/wiki/Outline_of_natural_language_processing

https://www.dataversity.net/a-brief-history-of-natural-language-processing-nlp/

https://towardsdatascience.com/nlp-101-what-is-natural-language-processing-b4a968a3b7bf

https://monkeylearn.com/natural-language-processing/

https://medium.com/sciforce/a-comprehensive-guide-to-natural-language-generation-dd63a4b6e548

https://medium.com/sciforce/nlp-vs-nlu-from-understanding-a-language-to-its-processing-1bf1f62453c1

https://monkeylearn.com/blog/natural-language-understanding/

https://www.analyticsvidhya.com/blog/2020/07/top-10-applications-of-natural-language-processing-nlp/

https://www.freelancermap.com/blog/what-does-nlp-engineer-do/

https://resources.workable.com/natural-language-processing-engineer-job-description

https://www.udacity.com/blog/2020/08/the-best-nlp-books.html

https://www.amazon.com/Natural-Language-Processing-Python-Analyzing/dp/0596516495